Researchers Develop Search and Rescue Technology That Sees Through Forest with Thermal Imaging

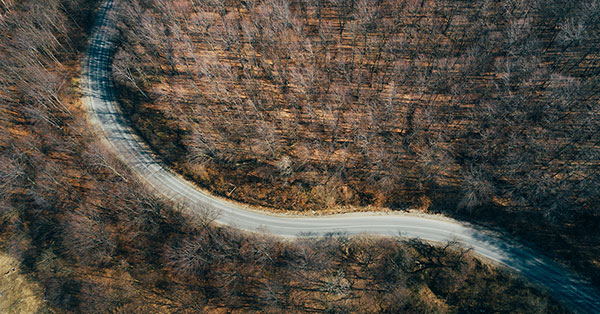

Finding a hiker lost in the woods is a challenging mission. In densely forested terrain, trees and other vegetation block most of the sunlight, and the ground reflects very little light. Because of this, thermal imaging is often used to help spot warm human bodies in a forested environment. But even thermal imaging can’t see through trees, so rescue missions remain difficult even when first responders are aided by a thermal-equipped drone or helicopter.

Luckily, we might soon see AI-guided thermal imaging drones that can “see through” the forest canopy and visualize heat signatures on the ground below.

A team of researchers at Johannes Kepler University in Austria published a paper in Nature Machine Intelligence that describes a technique called airborne optical sectioning (AOS), which allows an aerial imager to see through occlusions like a forest canopy. The team, headed by Professor Oliver Bimber, achieved a classification accuracy of over 90% using a drone equipped with a FLIR Vue Pro thermal imaging camera and a machine learning algorithm trained to identify humans below.

Airborne Optical Sectioning - Seeing Through Forest

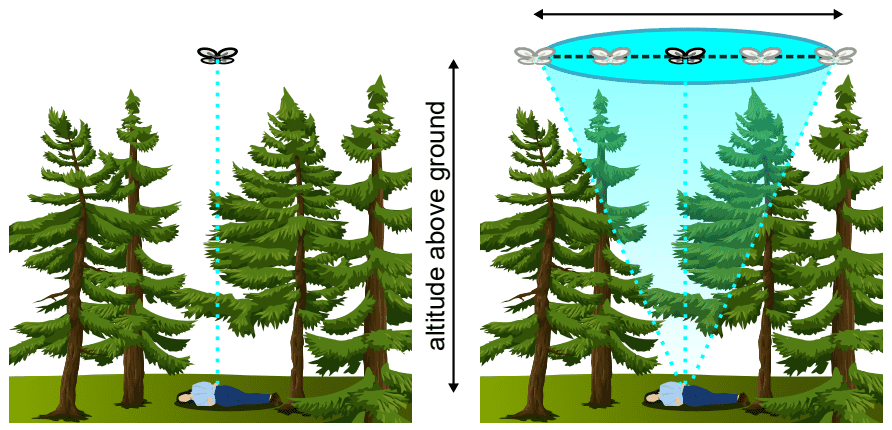

“The key technique that enables this is called airborne optical sectioning,” explains Bimber. “It's a synthetic aperture imaging technique that basically captures many images over a larger area that are computationally combined to produce integral images that allow us to see through forest.” By synthetically focusing on the ground below and combining multiple images into one integral image, everything that’s not in the focal plane—like trees—disappears, and what’s left is an amalgamation of images of the forest floor.

In contrast to recording and analyzing single images, AOS combines multiple images, and the resulting integral image is analyzed. (Schedl, 2020)

Using digital elevation models, which are available online for nearly every place on earth, the drone can fly overhead and know the position of the ground below. “For every point on the ground, we simply compute where the spec projects into the view of all of these recorded cameras and integrate them,” Bimber says.
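The projection-and-integration step Bimber describes can be sketched in a few lines. The following is a minimal toy model, not the team's implementation: it assumes a single horizontal axis plus height, a downward-looking pinhole camera, flat terrain standing in for a digital elevation model lookup, and a synthetic canopy of randomly opaque cells. All function names and numeric values are hypothetical and chosen only for illustration.

```python
import numpy as np

def project(ground_x, ground_z, cam_x, cam_z, focal_px, n_pixels):
    """Pixel coordinate at which a nadir-looking pinhole camera sees each ground point."""
    return focal_px * (ground_x - cam_x) / (cam_z - ground_z) + (n_pixels - 1) / 2

def integrate(images, cam_xs, cam_z, ground_x, ground_z, focal_px):
    """For each ground point, average the pixels it projects to across all images."""
    n_pixels = images.shape[1]
    total = np.zeros(ground_x.shape)
    count = np.zeros(ground_x.shape)
    for image, cam_x in zip(images, cam_xs):
        u = np.rint(project(ground_x, ground_z, cam_x, cam_z, focal_px, n_pixels)).astype(int)
        inside = (u >= 0) & (u < n_pixels)      # ground points inside this camera's view
        total[inside] += image[u[inside]]
        count[inside] += 1
    return total / np.maximum(count, 1)

def render(cam_x, cam_z, focal_px, n_pixels, canopy_z, cell, blocked, canopy_temp, person):
    """Toy thermal image: each pixel ray hits either an opaque canopy cell or flat ground at z=0."""
    slope = (np.arange(n_pixels) - (n_pixels - 1) / 2) / focal_px  # x offset per metre of depth
    canopy_x = cam_x + slope * (cam_z - canopy_z)
    ground_hit = cam_x + slope * cam_z
    ground_temp = ((ground_hit >= person[0]) & (ground_hit <= person[1])).astype(float)
    cell_index = np.floor(canopy_x / cell).astype(int) % blocked.size
    return np.where(blocked[cell_index], canopy_temp, ground_temp)

# Assumed scene: drone at 35 m, canopy layer at 10 m, warm "person" between x = 19.5 m and 20.5 m.
rng = np.random.default_rng(0)
cam_z, canopy_z, focal_px, n_pixels = 35.0, 10.0, 200.0, 201
cam_xs = np.linspace(10.0, 30.0, 81)            # drone positions along the flight line (m)
blocked = rng.random(1000) < 0.5                # roughly half of the 0.1 m canopy cells are opaque
images = np.stack([
    render(x, cam_z, focal_px, n_pixels, canopy_z, 0.1, blocked, 0.3, (19.5, 20.5))
    for x in cam_xs
])

ground_x = np.arange(0.0, 40.25, 0.25)          # ground points to focus on (m)
ground_z = np.zeros_like(ground_x)              # flat terrain stands in for a DEM lookup
integral = integrate(images, cam_xs, cam_z, ground_x, ground_z, focal_px)

person_idx = np.argmin(np.abs(ground_x - 20.0))
background = integral[np.abs(ground_x - 20.0) > 1.0]
print(f"integral at person: {integral[person_idx]:.2f}, "
      f"brightest background point: {background.max():.2f}")
```

In this sketch the canopy cells land on different ground points in every view, so their contribution averages into a roughly uniform haze, while the warm patch on the ground lines up in every view and stands out in the integral image.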

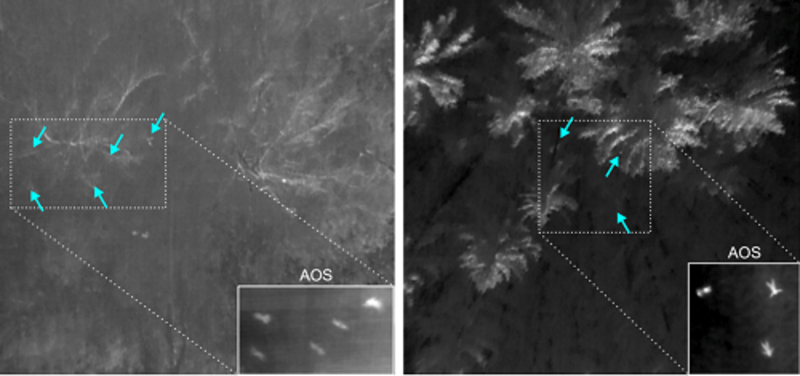

The results are impressive—where a drone with a thermal imaging camera might detect bits and pieces of a human heat signature, AOS reveals an entire human shape that can be classified by a trained AI.

The arrows indicate partial heat signals of occluded people on the ground. The insets show AOS results that are achieved when multiple thermal images are integrated. (Schedl, 2020)

Training the Artificial Intelligence

While thermal imaging is literally a lifesaver during search and rescue missions, it does have limitations, especially if operators are trying to take advantage of AI detection capabilities. “A deep learning technique—when trying to classify that these things are people—would have difficulties,” says Bimber, “because you get very similar heat signals by just patches on the ground that are heated up, or of branches that are heated up.” With an integral image cleared of occlusions, the AI can easily be trained to detect human figures.

FLIR has an extensive thermal dataset for training autonomous vehicles, but Bimber’s team found that they needed to create an aerial dataset to train their neural network to identify people from above. “We spent basically a year or so recording our own data sets that we could use for training,” Bimber says. Their data set included images of people from above in a variety of poses, and took into account the size of people relative to the typical flight distance of a drone.

Source: Search and Rescue with Airborne Optical Sectioning (www.youtube.com/watch?v=kyKVQYG-j7U)

The team expected to have to record images under a variety of different conditions, including different types of forests and during different seasons. However, they found that to be unnecessary. “The occlusion remover works so well that the occlusion becomes invariant. So it really doesn't matter if you compute these integral images from single images that you recorded in forest or in an open field,” explains Bimber. “Because of that, we could record the training data set under controlled conditions in an open field.”

Wildlife Applications, Archeology, and More

Airborne optical sectioning is not limited to search and rescue; it also has applications in fields like archeology, wildlife observation, forestry, and agriculture. The technology is versatile because, as Bimber explains, it can be applied to any wavelength, not just infrared, and to any kind of aircraft.

“It's really low cost because we don't deal with special sensors,” he adds. “You just can use ordinary cameras.”

For archeology projects, for example, the team works in RGB to remove obstructions and make architectural structures visible, while agriculture applications usually take advantage of near-infrared imaging.

Wildlife observation also benefits from thermal imaging and AOS, and the team has helped researchers to track animal populations. “There are lots of people interested in finding out how large populations are, and how populations move and change over time and in size,” Bimber explains. Last year the team worked on a project to count the largest heron population in Europe, which nests annually in a wetland region on the border between Germany and Austria.

Source: Airborne Optical Sectioning for Nesting Observation (www.youtube.com/watch?v=81l-Y6rVznI)

Ornithologists come every year to count the heron population using drones—but many of the birds and their nests are concealed below the tree crowns. With airborne optical sectioning, Bimber’s team was able to easily discern the herons through the forest. “That was the first time that the ornithologists actually could count the population,” he says.

The Future of Search & Rescue

For future testing, the team is looking to upgrade to a drone that can fly for longer (up to several hours) and to a thermal camera like the FLIR Boson, which includes an SDK for processing images in real time. Their goal is an autonomous drone that downloads thermal images in real time, processes them, and then computes where to fly next. If it sees something that might be a person, it will investigate further, finding missing people as quickly and as reliably as possible. It will also be capable of communicating with the rescue team and sending its results to their mobile devices.

Moving all the required computation out of the lab and onto a mobile payload is an extra challenge, but one the team is confident it can soon meet. To the human eye, the integral images created in real time on a drone lack the crispness of those combined later in the lab on high-end computers, but the onboard AI is still able to classify human forms easily.

“We hope to do this in the next five years,” Bimber says. “The technology is ready, it just has to be integrated.” Hopefully we’ll soon see hikers and missing persons rescued in record time thanks to the new technology.

References:

Schedl, D.C., Kurmi, I. & Bimber, O. Search and rescue with airborne optical sectioning. Nat Mach Intell 2, 783–790 (2020). https://doi.org/10.1038/s42256-020-00261-3