Comparing Sensitivity of Thermal Imaging Camera Modules

Beyond resolution, thermal camera integrators must consider thermal sensitivity

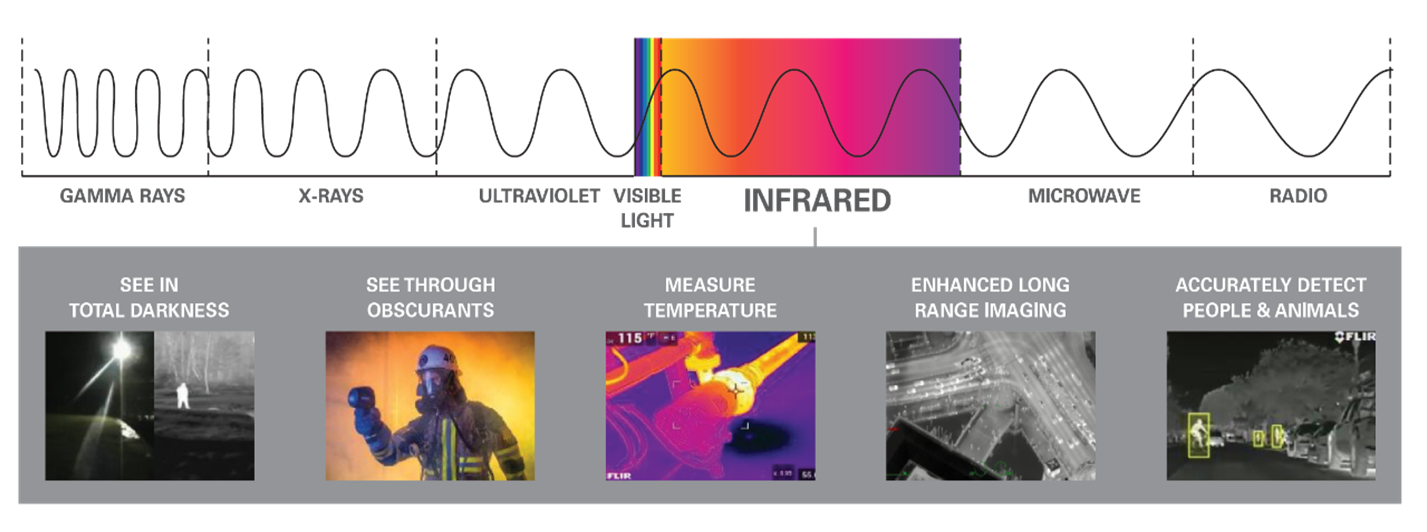

Thermal imagers make pictures from heat, also called infrared (IR) or thermal energy. They capture IR energy and use it to create images, delivered through digital or analog video outputs, with the details defined by differences in temperature. Infrared occupies a different part of the electromagnetic spectrum than visible light, so a camera that detects visible light won’t see thermal energy, and vice versa.

Infrared camera detectors are made of an array of individual detector elements. Because wavelengths in the IR spectrum are longer than those of visible light, each IR detector element has to be correspondingly larger than the elements on a visible light detector in order to absorb the longer wavelength. As a result, a thermal camera usually has lower resolution (fewer pixels) than a visible light sensor of the same mechanical size.

Figure 1: The electromagnetic spectrum includes the infrared waveband, which ranges from 0.75 µm in the near-infrared to nearly 1 mm (1,000 µm) in the far-infrared

Originally developed for surveillance and military operations, thermal cameras are now widely used in applications such as building inspections (moisture, insulation, and roofing, for example), firefighting, autonomous vehicles, automatic emergency braking (AEB) systems, industrial inspections, scientific research, and much more. These cameras come in a variety of form factors, from handheld cameras to unmanned drones to scientific instruments sent into outer space.

Engineers developing products or systems incorporating thermal cameras need to have a clear understanding of the key design specifications including scene dynamic range, field of view, resolution, sensitivity, and spectral range, to name a few. Different cameras can excel at different things, so engineers need to understand the tradeoffs between different types of thermal camera modules and the impact those differences will have on the final product performance.

Sensitivity: A key variable that affects thermal imaging clarity and utility

Figure 2: Thermal sensitivity is a key performance metric for low-contrast scenes including foggy weather

One important specification that is often overlooked in favor of resolution is thermal sensitivity, which defines the smallest temperature difference a camera can detect. A thermal camera’s sensitivity has a direct impact on the clarity and sharpness of the images it can produce. Thermal sensitivity, also referred to as Noise Equivalent Temperature Difference (NETD), is expressed in millikelvins (mK). The lower the NETD value, the more sensitive the detector and the better it will be at detecting small temperature differences. Integrators and developers should look for manufacturers that can provide NETD performance at the industry-standard 30 °C. The table below can be used to generally rate the sensitivity of a thermal detector.
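One common way to arrive at an NETD figure is to divide a pixel's temporal noise by its responsivity (signal change per kelvin). The Python sketch below illustrates that ratio for a single pixel viewed against two blackbody temperatures near 30 °C. The function name, the raw-count inputs, and the two-point method are assumptions made for illustration, not a manufacturer's test procedure.

```python
from statistics import mean, stdev
from typing import Sequence


def estimate_netd_mk(
    counts_cold: Sequence[float],
    counts_hot: Sequence[float],
    t_cold_c: float,
    t_hot_c: float,
) -> float:
    """Estimate NETD in millikelvins for one pixel (illustrative only).

    counts_cold / counts_hot: raw readings of the same pixel over
    successive frames while viewing a uniform source at each temperature.
    """
    if t_hot_c == t_cold_c:
        raise ValueError("the two source temperatures must differ")

    # Responsivity: change in mean signal per kelvin of scene temperature.
    # A difference in degrees Celsius equals the same difference in kelvins.
    responsivity = (mean(counts_hot) - mean(counts_cold)) / (t_hot_c - t_cold_c)
    if responsivity == 0:
        raise ValueError("pixel shows no response to the temperature change")

    # Temporal noise: frame-to-frame standard deviation at a fixed scene.
    noise = stdev(counts_cold)

    # NETD is the temperature difference whose signal equals the noise.
    return 1000.0 * noise / abs(responsivity)


# Hypothetical readings at 29 °C and 31 °C (made-up numbers).
cold = [100, 102, 98, 100, 102, 98]
hot = [198, 202, 200, 200, 202, 198]
netd = estimate_netd_mk(cold, hot, 29.0, 31.0)
print(f"Estimated NETD: {netd:.1f} mK")
```

A real measurement would typically average over many pixels and frames and would control for optics and integration time, which this sketch leaves out.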

Table 1. Thermal sensor sensitivity range and description

| Sensitivity (mK) | Description |
| --- | --- |
| <30 | Excellent |
| 40-49 | Great |
| 50-59 | Good |
| 60-69 | Acceptable |
| 70-80 | Satisfactory |
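As a rough illustration, the ratings in Table 1 can be expressed as a small Python lookup. The function name `rate_netd` is hypothetical, and treating each band as running up to the start of the next one is an assumption. Values the table does not cover (30-39 mK and above 80 mK) return `None` rather than a guessed rating.

```python
from typing import Optional

# Middle bands from Table 1 as (lower bound inclusive, upper bound exclusive, label).
# Assumption: each band extends up to the start of the next one.
_BANDS = [
    (40.0, 50.0, "Great"),
    (50.0, 60.0, "Good"),
    (60.0, 70.0, "Acceptable"),
]


def rate_netd(netd_mk: float) -> Optional[str]:
    """Return the Table 1 description for an NETD value in mK, or None."""
    if netd_mk < 0:
        raise ValueError("NETD cannot be negative")
    if netd_mk < 30:
        return "Excellent"
    for low, high, label in _BANDS:
        if low <= netd_mk < high:
            return label
    if 70 <= netd_mk <= 80:
        return "Satisfactory"
    # Not covered by Table 1 (30-39 mK or above 80 mK).
    return None


for value in (20, 45, 65, 80):
    print(value, "mK ->", rate_netd(value))
```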

Increased sensitivity makes thermal imagers more effective at seeing smaller temperature differences, which is especially important in scenes with low thermal contrast and when operating in challenging environmental conditions like fog, smoke, and dust. Selecting an entry-level, lower-cost thermal camera with “acceptable” to “satisfactory” thermal sensitivity results in an end product that renders low-contrast scenes poorly, with reduced image quality, shorter detection range, and limited situational awareness compared to cameras with greater sensitivity. Devices with better thermal sensitivity are ideal for a wide variety of uses, from search and rescue to industrial inspection to security.

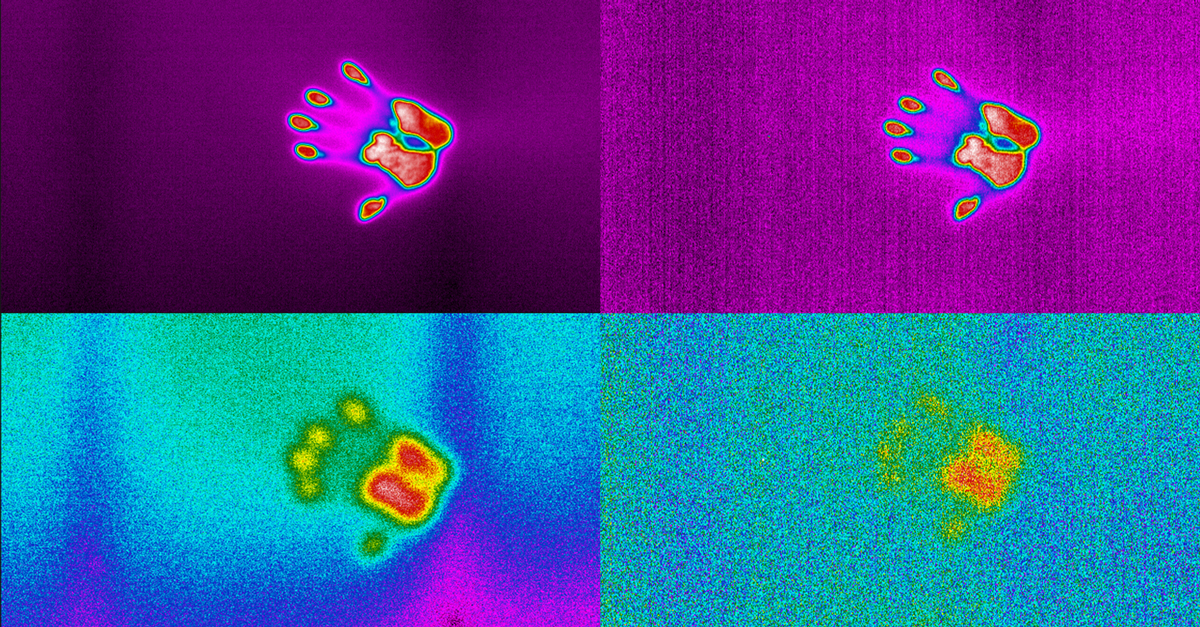

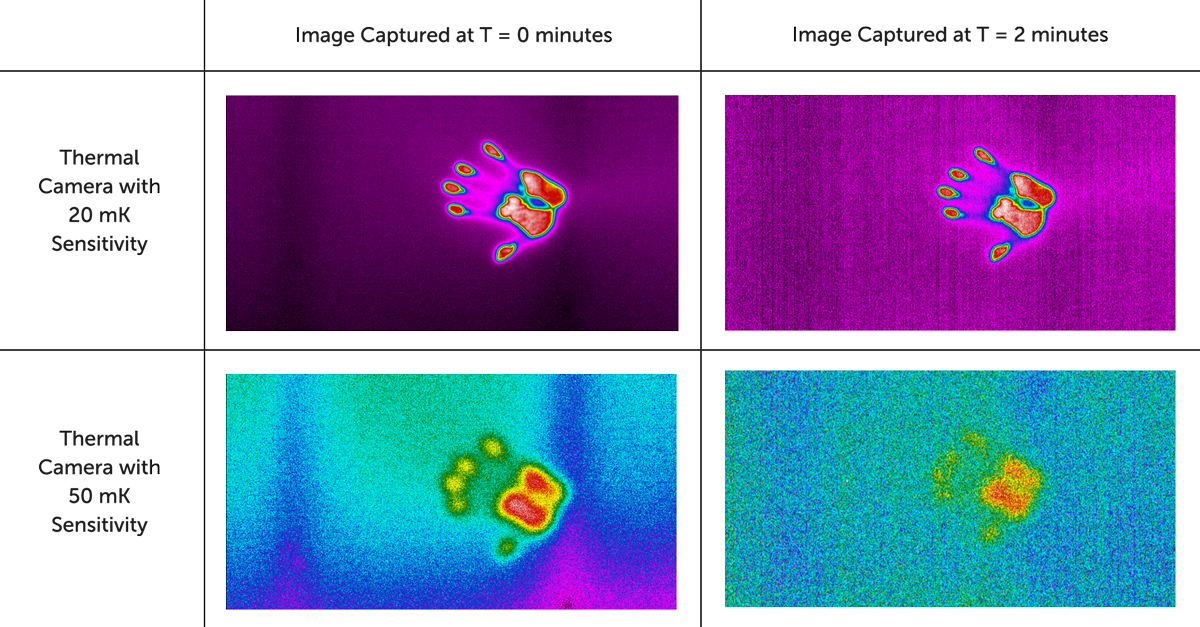

Figure 3: A handprint imaged by 20 mK and 50 mK thermal sensors at time zero and after two minutes

Cooled or Uncooled?

IR imaging cameras with a cooled detector provide distinct advantages over thermal imaging cameras with an uncooled detector. A cooled thermal imaging camera has an imaging sensor that is integrated with a cryocooler, which cools the sensor to cryogenic temperatures. This reduction in sensor temperature is necessary to reduce thermally-induced noise to a level below that of the signal from the scene being imaged and can result in significantly improved thermal sensitivity.

However, these improvements in performance come at a cost. Cooled IR cameras are generally larger, heavier, and more power hungry. In addition to sacrificing SWaP (size, weight, and power), cooled cameras are significantly more expensive and subject to mechanical wear that reduces the mean time to failure (MTTF) of the camera. Cryocoolers have moving parts with extremely tight mechanical tolerances that degrade over time, and they contain helium gas that can slowly leak through seals.

Recent improvements in uncooled thermal sensors have brought sensitivity to better than 20 mK, a drastic improvement over legacy systems that potentially makes uncooled long-wave infrared (LWIR) cameras a viable option for a wide variety of new applications. Tempting as the switch may be, uncooled IR thermal cameras cannot simply replace cooled thermal cameras. Product developers and system integrators need to consider additional requirements regarding imaging speed, spatial resolution, spectral filtering, and more.

Figures 4-7: Application images captured with the Boson+

(Left image: consumer electronics inspection; right image: home inspection)

(Left image: public surveillance; right image: coastal surveillance)

For more information on thermal sensitivity, or to speak with an expert, please see www.flir.com/bosonfamily.